(Image found here: http://en.wikipedia.org/wiki/File:Salachinesa2.png )

Last week I talked about the Turing Test, which suggests that if a computer can carry on a conversation with a person (via some text-based chat program) and the person can’t tell whether they’re talking to another human or a machine, then the machine may be considered intelligent.

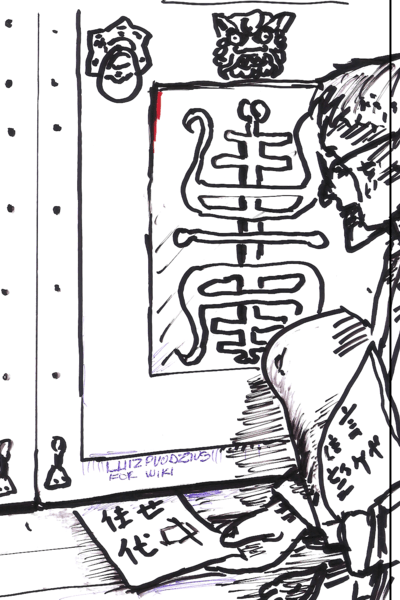

One of the main objections to the Turing Test is the so-called Chinese Room argument. The Chinese Room is a thought experiment invented by John Searle to show that, even if a digital computer could pass the Turing Test, it still would not understand its own words, and thus should not be considered intelligent.

The argument goes like this. A digital computer (by which I just mean any ordinary computer, like your PC) can do several things. It can receive input; it can follow a long list of instructions (the program code) that tell it what to do with its input; it can read and write to internal memory; and it can send output based on these internal computations. Any digital computer that passes the Turing Test will simply be doing these basic things in a particularly complicated way.

So, the argument continues, let’s imagine replacing the computer with a man in a room. The goal is still to pass the Turing Test, this time in Chinese. Someone outside passes in slips of paper with Chinese characters, and the man must pass back slips of paper with responses in Chinese. But the man himself knows no Chinese, only English. However, the room contains an enormous library of books, full of instructions on how to handle any Chinese characters. For any message he receives, he looks up the characters in his library and follows the instructions (in English) on how to compose a response. He also has a pencil and paper he can use to write notes, do figuring as necessary, and erase notes, as instructed by his books. Once he has his answer, he writes it down and passes it back.

Here, the library of books corresponds to the computer’s program code, the pencil and paper correspond to internal memory, and the man (who understands English but not Chinese) corresponds to the hardware that executes the program (which understands program instruction codes but not human language).
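For readers who think in code, here is a toy sketch of that mapping in Python. It is only an illustration under my own assumptions: the names `RULE_BOOK`, `FALLBACK_REPLY`, and `Room` are hypothetical, the two-entry rule book stands in for Searle's enormous library, and nothing here comes from Searle himself. The point is just that the lookup step matches symbols by shape and never consults their meaning.

```python
# Hypothetical, tiny stand-in for the library of books (the program code).
# Each rule maps (current notes, incoming slip) -> (reply slip, new notes).
RULE_BOOK = {
    ("start", "你好"): ("你好！", "greeted"),
    ("greeted", "你好吗？"): ("我很好，谢谢。", "greeted"),
}

# Reply used when no rule matches; also just an uninterpreted string.
FALLBACK_REPLY = "请再说一遍。"


class Room:
    """Toy Chinese Room: books = program, notes = memory, respond = the man."""

    def __init__(self, rule_book):
        self.rule_book = rule_book  # the library of books
        self.notes = "start"        # the pencil-and-paper notes

    def respond(self, slip):
        # The "man": look up the symbols exactly as written and follow the rule.
        # No step here depends on what the characters mean.
        reply, new_notes = self.rule_book.get(
            (self.notes, slip), (FALLBACK_REPLY, self.notes)
        )
        self.notes = new_notes      # erase and rewrite the notes as instructed
        return reply


room = Room(RULE_BOOK)
print(room.respond("你好"))
print(room.respond("你好吗？"))
```

Swapping the Chinese strings for arbitrary tokens would leave `respond` unchanged, which is roughly the intuition the thought experiment leans on. Whether that intuition carries over to the system as a whole is exactly what is in dispute.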

Searle points out that, although the man in the Chinese Room can theoretically carry on a perfectly good conversation in Chinese, there is nothing in the room (neither the man nor the books) that can be said to understand Chinese. Therefore, the Chinese Room as a whole can act as if it understands Chinese, but it doesn’t really. In the same way, a digital computer can act as if it’s thinking, but it isn’t really. The computer is only manipulating symbols, which have no meaning to the computer; it can never understand what it is doing. Real understanding requires an entirely different kind of hardware – like the kind in the human brain, made up of biological neurons – which a digital computer simply does not possess.

In fact, says Searle, even if a digital computer were to precisely simulate a human brain, neuron by neuron, and function correctly in just the same way, it still would not understand what it was doing in the way that a human brain does. This follows, he says, merely as a special case of the general Chinese Room argument.

I have my own opinion on the Chinese Room argument, which I’ll give tomorrow. In the meantime, what do you think? Is his argument convincing?

One of my objections is the assumption that the man in the room will remain unable to understand Chinese.

I submit that if he followed the instructions and carried out a reasonable number of conversations he would quickly develop some level of understanding. Maybe just fragments or words or patterns.

In fact, really, what is the difference between this and the language learning process?

You can learn Chinese from a book (but you’ll have trouble with the tones) and over time you begin to internalize it.

This has further implications for things like extending our capabilities by outsourcing mental functions. If I have a to-do list, an Evernote account, and an address book, where is the hard limit to my memory? I remember where I left my phone. That is enough.

Have to disagree with you here. The man in the room may or may not learn Chinese eventually, but if he does, that merely means that the analogy with a digital computer no longer applies. A computer processor never learns English, no matter how long it may simulate an English-speaking AI.

If you like, imagine the man in the room has some sort of disease that prevents him from forming new memories, and all he can do is follow the instructions in the books. That may be a clearer way of viewing Searle’s argument.
