For a long time, my AI strategy has been:

- First, figure out the AI’s knowledge structure – the way knowledge is stored inside its mind. You’d think this would be easy, but the problem of knowledge representation turns out to be nontrivial (much like the Pacific Ocean turns out to be non-dry).

- Once I know how to represent knowledge, I will begin work on knowledge acquisition, or learning.

To me, this order made sense. A mind must have a framework for storing information before you can help it learn new information.

Right?

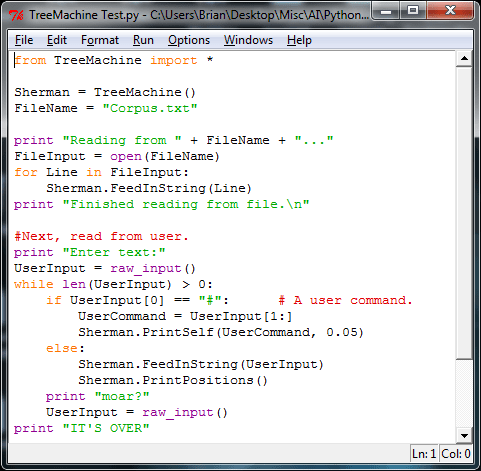

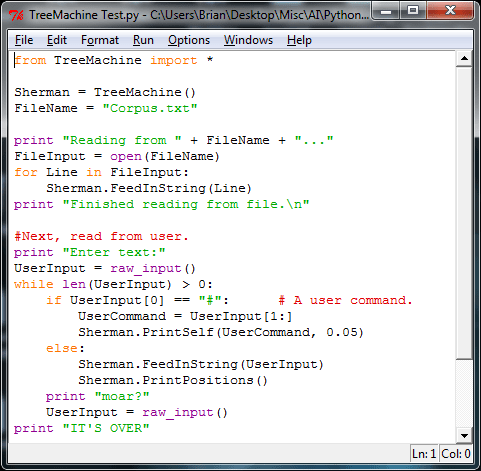

Well, for the past week, I’ve tackled the problem from the opposite direction. I’ve pushed aside my 5,000+ lines of old code (for the moment) and started from scratch, building an algorithm that’s focused on learning.

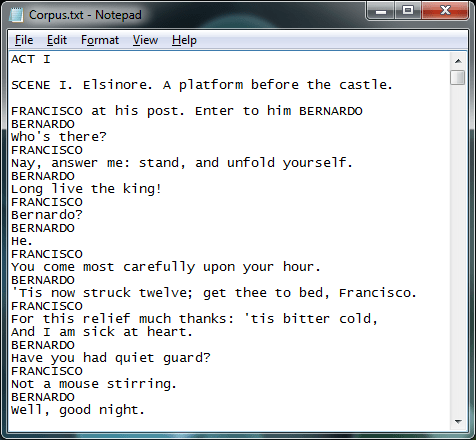

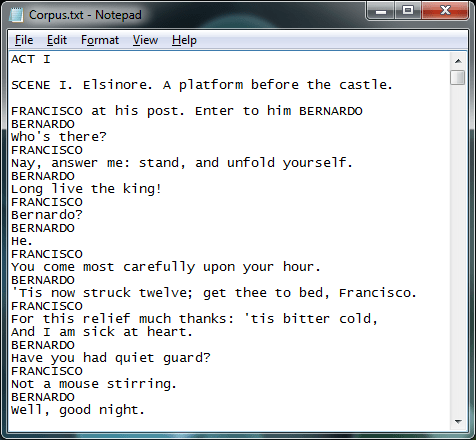

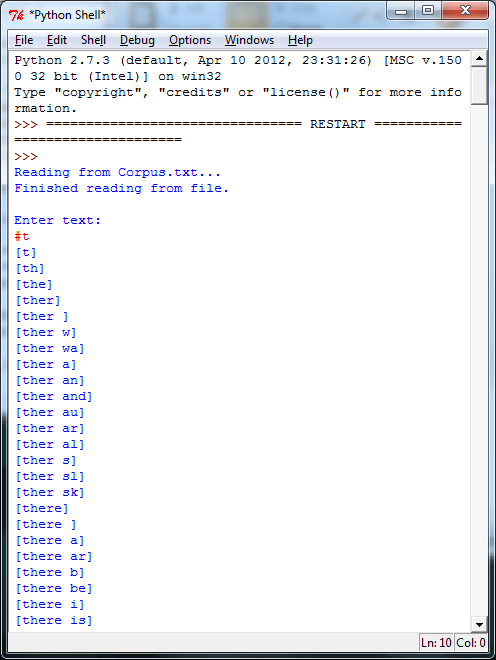

The result is a little program (fewer than 200 lines long) that reads in a text file and searches for patterns, with no preconceived notions about what constitutes a word, a punctuation mark, a consonant, or a vowel. For instance:

This AI-in-training makes short work of Hamlet, plowing through the Bard’s masterpiece in about ten seconds. The result is a meticulous, stats-based tree of patterns. I can examine any particular branch that starts with any letter or letters I like.

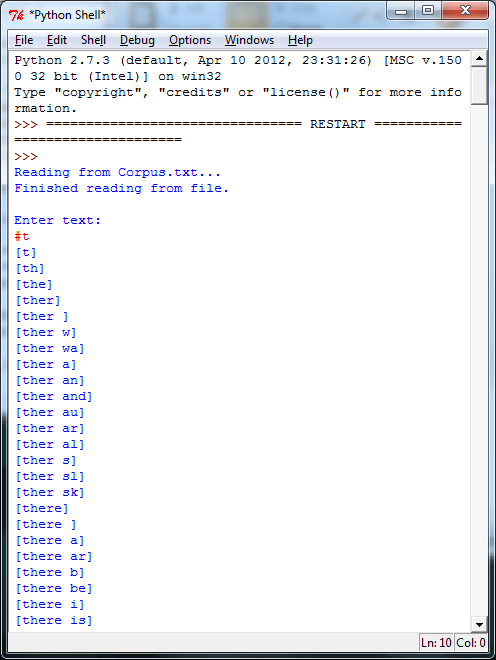

Here I’m looking at all the patterns it found that start with “t”:

The full list is much longer, but already you can see it’s picked up some interesting patterns. It’s noticed “the” and “there”, and it’s noticed that both are often followed by a space. It’s even started picking out which letters most commonly start the next word. And it’s noticed a pattern of words ending in “ther”, presumably “mother”, “father”, “together”, “rather”, and their kin.
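If you’re curious what a tree like that might look like in code, here’s a minimal sketch. To be clear, this is not my actual program, just one simple way to get the same flavor: every character is treated alike, and each node in the tree counts how often the string leading to it shows up. The names `build_tree`, `branch`, `max_depth`, and `min_count` are made up for this illustration, and the sample sentence stands in for Hamlet.

```python
class Node:
    """One node in the pattern tree: a count plus child nodes keyed by character."""

    def __init__(self):
        self.count = 0
        self.children = {}


def build_tree(text, max_depth=6):
    """Record every substring of up to max_depth characters, with no notion of words."""
    root = Node()
    for start in range(len(text)):
        node = root
        for ch in text[start:start + max_depth]:
            node = node.children.setdefault(ch, Node())
            node.count += 1
    return root


def branch(root, prefix, min_count=2):
    """List (pattern, count) pairs under prefix, most frequent first."""
    node = root
    for ch in prefix:
        node = node.children.get(ch)
        if node is None:
            return []
    found = []
    stack = [(prefix, node)]
    while stack:
        pattern, current = stack.pop()
        if current.count < min_count:
            continue  # longer patterns can only be rarer, so stop here
        found.append((pattern, current.count))
        for ch, child in current.children.items():
            stack.append((pattern + ch, child))
    return sorted(found, key=lambda item: (-item[1], item[0]))


sample = "the mother and the father went there together"
root = build_tree(sample)
for pattern, count in branch(root, "t"):
    print(repr(pattern), count)
```

Even on one sentence, the “t” branch shows “the” and “ther” turning up repeatedly, along with the space that tends to follow them. A real text just gives the counts more to work with.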

This algorithm is cool, but rather limited at the moment. It can notice correlations between letters, and fairly simple strings, but it doesn’t do well with more complex patterns. I won’t bore you with the details, but rest assured I’m working on it.

In the meantime, AI is fun again. I mean, it was mostly fun before, but I was entering a dry spell where the work had started feeling like a chore. Every now and then, a fresh perspective helps get you excited again.

In this case, it also showed me that I had my strategy backwards. Just as you can’t build an ontology and weld on input/output later, you can’t build an ontology and weld on learning later. Learning, it appears, must come first. The how determines the what.

And this new direction is fun for another reason, too.

Till recently, I’d been coding in C++. Now C++ is a white-bearded, venerable patriarch of a language: time-tested, powerful, respected by all. But it’s also a grumpy old man who complains mightily about syntax and insists you spend hours telling it exactly what you want to do.

This new stuff, on the other hand, I’m coding in Python. Python is a newer language, not as bare-bones efficient as C++ but a hell of a lot simpler from the programmer’s point of view, shiny and full of features and full of magic. And I’m new to Python myself, so I’m still in the honeymoon phase. I’m not saying one or the other is “better” overall, but right now, Python is a lot more fun.

And really, programming ought to be fun. Especially if you’re building a mind.